The Center of Excellence (CoE) Model for Distributed AI Innovation

To enable businesses to build autonomous workflows rapidly, without compromising enterprise security or ethical standards.

2/16/2026 · 2 min read

As organizations accelerate AI adoption, they often face a structural dilemma: how to scale innovation across business units without losing control over ethics, quality, and alignment. This challenge is amplified as companies increasingly empower citizen developers (non-IT staff using low-code/no-code tools) to build and adapt AI agents. While this democratizes innovation and unlocks domain-driven value, it also introduces significant governance complexities. Robust frameworks are essential to balance agility with risk control, ensuring that AI-powered solutions developed at the edge remain secure, compliant, and strategically aligned, particularly under evolving regulatory mandates like NIS2 and the EU AI Act, whose obligations are being phased in.

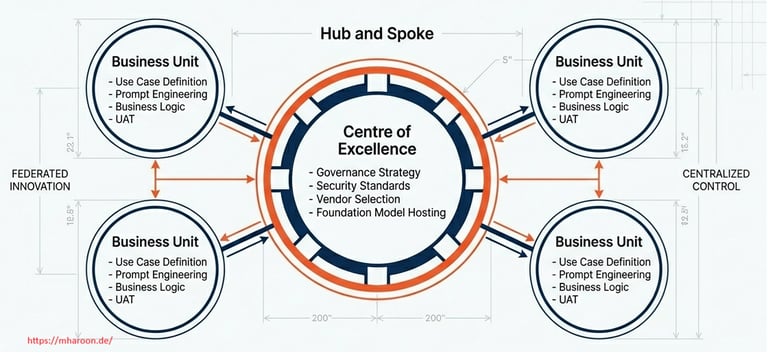

To manage AI development across an enterprise without stifling local innovation, one option is to adopt a hub-and-spoke model. In this structure, a central hub (often an AI CoE or “AI Office”) defines governance and provides shared resources, while distributed spokes embedded in business units drive implementation on the ground. This federated approach prevents the central team from becoming a bottleneck or losing touch with frontline needs. Each spoke (business unit pod) can experiment and deliver AI solutions rapidly, informed by deep domain knowledge, while the hub ensures that all spokes adhere to common standards, ethics, and security practices. In effect, the governance is “loose-tight”: loose in allowing flexibility for local implementation, but tight in enforcing core principles and policies enterprise-wide.

Key responsibilities are split between the hub and spokes. The central AI CoE (hub) acts as the “nerve center” for governance. It develops and maintains standards, frameworks, and templates applicable across all AI initiatives. For example, the hub might publish principle-based guidelines on algorithmic ethics, template risk assessment checklists, and standardized approval workflows for deploying an AI agent. It also provides centralized oversight and risk management – maintaining an inventory of all AI use cases in flight, monitoring systemic risks (e.g. interactions between multiple AI systems), and reporting on AI progress and issues to senior leadership.
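As a rough illustration of the inventory idea, the hub's register of use cases could start as a simple list that is scanned for systems touched by more than one AI use case, which is one crude proxy for interaction risk. This is a minimal sketch, not a prescribed design: the `AIUseCase` fields, the `shared_systems` helper, and the example entries are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AIUseCase:
    """One entry in the hub's inventory (all fields are hypothetical)."""
    name: str
    business_unit: str
    systems_touched: frozenset[str] = field(default_factory=frozenset)


def shared_systems(inventory: list[AIUseCase]) -> dict[str, list[str]]:
    """Map each system to the use cases touching it, keeping only systems
    touched by more than one use case (a crude proxy for interaction risk)."""
    by_system: dict[str, list[str]] = defaultdict(list)
    for use_case in inventory:
        for system in sorted(use_case.systems_touched):
            by_system[system].append(use_case.name)
    return {s: names for s, names in by_system.items() if len(names) > 1}


# Illustrative entries only; names and systems are made up.
inventory = [
    AIUseCase("pump-failure-predictor", "refining", frozenset({"historian", "cmms"})),
    AIUseCase("invoice-triage-agent", "finance", frozenset({"erp"})),
    AIUseCase("work-order-drafter", "maintenance", frozenset({"cmms"})),
]
overlaps = shared_systems(inventory)
print(overlaps)
```

In this made-up inventory, only the maintenance system (`cmms`) is shared by two use cases, so it is the one the hub would flag for a closer look.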

Another critical hub function is capability building and knowledge sharing. The CoE can run training programs, host internal AI forums or “communities of practice,” and maintain repositories of best practices and reusable assets (code, pre-trained models, test datasets). Finally, the central hub coordinates strategy: ensuring that AI efforts in different business units remain aligned with overall enterprise objectives and identifying synergies (e.g. one refinery’s predictive maintenance model could be reused at another plant).

Crucially, even in a federated model, central oversight is not abdicated. The hub maintains line-of-sight to all AI activities via regular check-ins, enterprise dashboards, and cross-functional councils. The CoE might also institute gating processes: for example, any AI agent that will interface with critical control systems must undergo a central review and pen-test before deployment.
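The gating rule described above can be expressed as a small pre-deployment check. The sketch below assumes a hypothetical `AgentSubmission` record and a hypothetical `deployment_blockers` function; a real CoE would likely wire such a rule into its approval workflow tooling rather than a standalone script.

```python
from dataclasses import dataclass


@dataclass
class AgentSubmission:
    """A spoke's request to deploy an AI agent (hypothetical fields)."""
    name: str
    touches_critical_control_systems: bool
    central_review_passed: bool = False
    pen_test_passed: bool = False


def deployment_blockers(agent: AgentSubmission) -> list[str]:
    """Return the gates still outstanding; an empty list means the
    agent is clear to deploy under this rule."""
    # Agents that stay away from critical control systems are not
    # subject to this particular gate.
    if not agent.touches_critical_control_systems:
        return []
    blockers = []
    if not agent.central_review_passed:
        blockers.append("central review")
    if not agent.pen_test_passed:
        blockers.append("pen-test")
    return blockers


# Illustrative submissions only.
chatbot = AgentSubmission("hr-faq-bot", touches_critical_control_systems=False)
valve_agent = AgentSubmission(
    "valve-tuning-agent",
    touches_critical_control_systems=True,
    central_review_passed=True,
)
print(deployment_blockers(chatbot))
print(deployment_blockers(valve_agent))
```

Returning the list of outstanding gates, rather than a bare yes/no, lets the spoke see exactly what the hub still requires before deployment.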

As enterprises empower citizen developers to build AI agents, especially in complex and regulated sectors like energy, the question is no longer whether innovation can happen, but whether it can scale responsibly. The hub-and-spoke governance model offers a pragmatic solution: it decentralizes innovation while centralizing accountability. By combining the agility of domain-led experimentation with the discipline of centralized oversight, organizations can foster a culture of safe, compliant, and high-impact AI development.